Background #

When working on the Coles Promotion Manager app, my team was asked to create a new feature for users to plan and manage a new type of promotion. This new type would combine items from different categories, like a meal deal with a soup, main course, and dessert.

We were given the opportunity and mandate to design the new feature to be accessible from the start. I was excited to begin laying the foundations for a proactive approach, rather than finding and fixing accessibility issues after building features.

Problem #

Our team was new to building and testing with accessibility requirements baked in from the start. We needed a clear and actionable approach to create a new feature that meets accessibility standards without overly taxing team velocity.

Solution #

I chose to use the Accessibility Acceptance Criteria (AAC) whenever possible, rather than referring to the complex and wordy Web Content Accessibility Guidelines (WCAG).

What are Accessibility Acceptance Criteria?

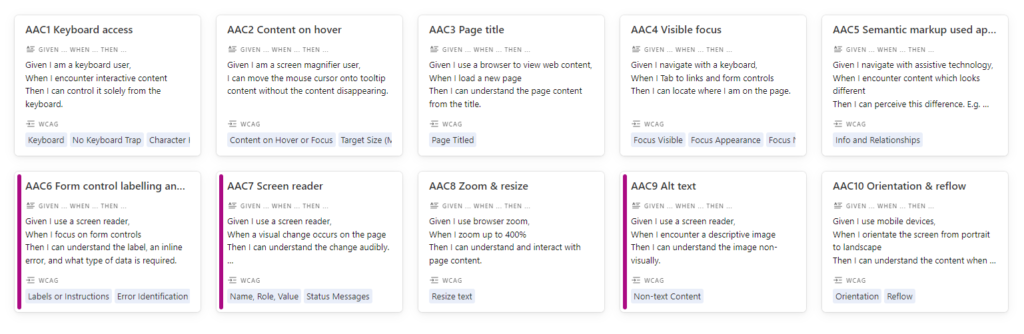

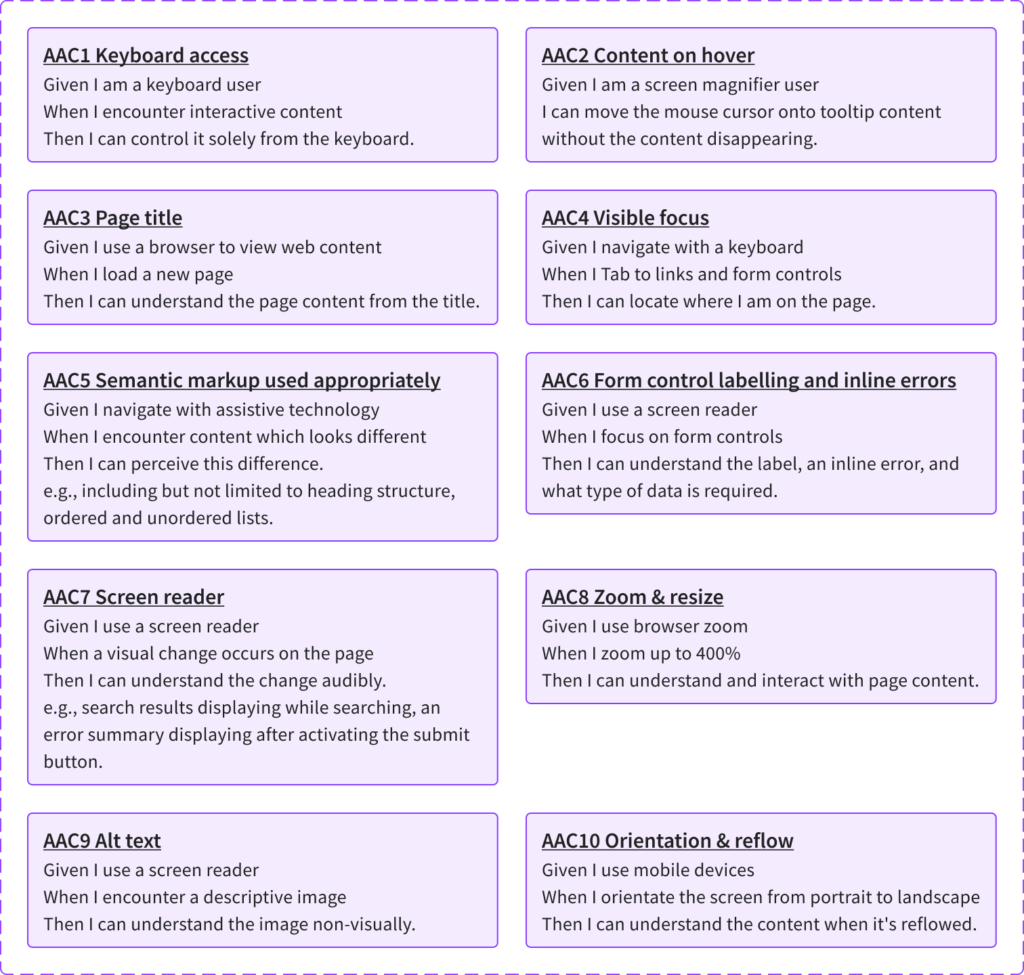

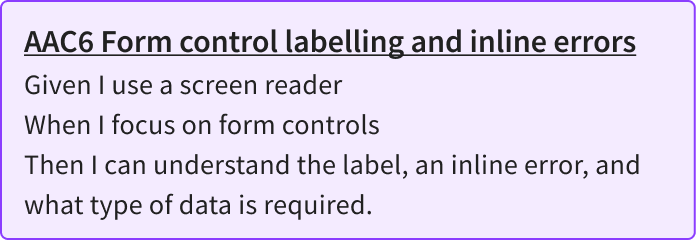

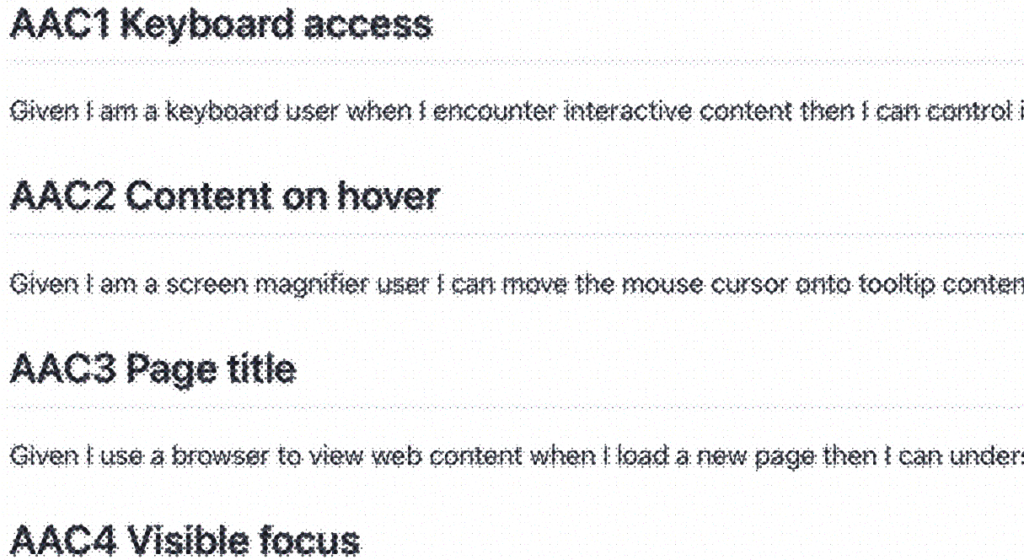

AACs are broad accessibility criteria applied when user stories are created. They are BA-focused rather than developer-focused: they describe the key behaviours the finished feature needs to display (the outcome) without specifying how to achieve it.

Instead, they act as guard rails: a developer can implement the feature in whatever way they choose, as long as the outcome is met.

They define the boundaries of a user story and are used to confirm when a story is complete and working as intended. They’re written in plain language and easily understood by members of a team who have different expertise and varying levels of fluency in each other’s technical jargon.

Accessibility Acceptance Criteria

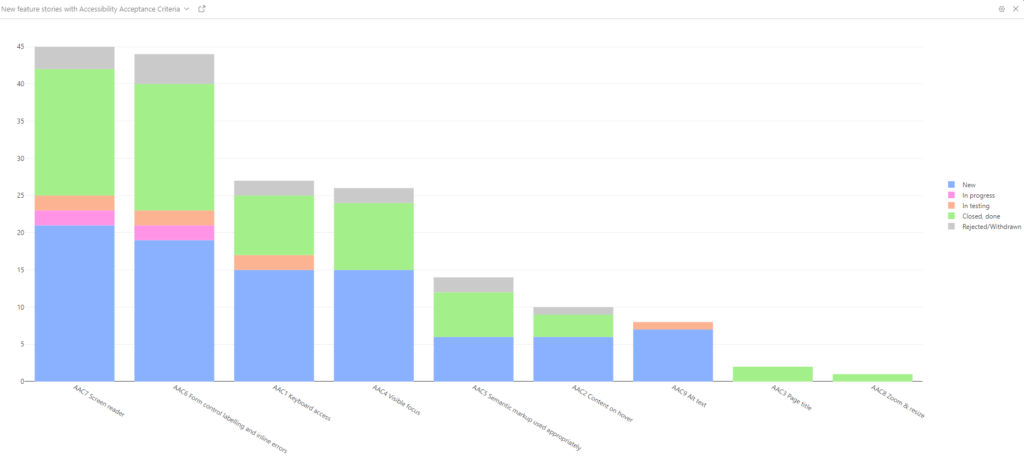

The 10 AAC are a shortlist of frequently needed accessibility requirements that help teams design, build and test inclusively from the beginning. With a shift-left approach, we can avoid much of the costly work of fixing accessibility issues after they reach production.

I mapped each of the 10 AACs to one or more WCAG success criteria to know when we could use the corresponding AAC.

AAC in design annotations #

Next, I created an AAC annotation component to efficiently annotate design mock-ups.

Then I added relevant AAC annotations in a margin comments area alongside a design mock-up.

Adding AAC to stories #

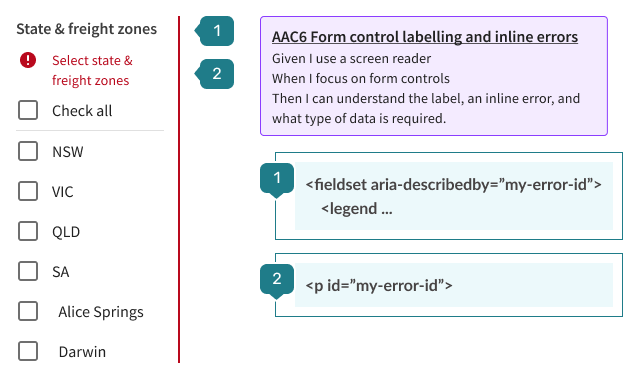

I worked together with the business analysts to add AAC to stories.

To speed up learning in the early phases, I added checklist items directly to stories. For example, AAC6 has a checklist item for errors:

Does the screenreader announce each form control error?

- Trigger form validation errors. Open NVDA screenreader.

- Use Tab and Shift+Tab to navigate through form controls.

- Check the form control error is announced by NVDA when focused on the form control.

This made our definition of done clear to everyone in the squad.
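As an illustration of the outcome the AAC6 error check looks for, here is a minimal sketch of form markup that lets a screen reader announce an error when the control receives focus. It is not the markup from the Promotion Manager app: the `renderTextField` helper, the field id and the error text are all hypothetical. The sketch assumes the common pattern of linking the error message to the control with `aria-describedby` and flagging the control with `aria-invalid`.

```typescript
// Hypothetical helper for illustration; not taken from the real codebase.
interface FieldOptions {
  id: string;
  label: string;
  error?: string; // validation message, present only when the field is invalid
}

// Escape text so it is safe inside HTML content and attribute values.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Build the markup for a labelled text input. When an error is present,
// the input is marked invalid and points at the error text, so the message
// is part of what a screen reader reads out on focus.
function renderTextField({ id, label, error }: FieldOptions): string {
  const safeId = escapeHtml(id);
  const errorId = `${safeId}-error`;
  const errorAttrs = error
    ? ` aria-invalid="true" aria-describedby="${errorId}"`
    : "";
  const errorHtml = error
    ? `\n<p id="${errorId}">${escapeHtml(error)}</p>`
    : "";
  return (
    `<label for="${safeId}">${escapeHtml(label)}</label>\n` +
    `<input type="text" id="${safeId}"${errorAttrs}>` +
    errorHtml
  );
}

// Example field and message are made up for this sketch.
console.log(
  renderTextField({
    id: "promo-name",
    label: "Promotion name",
    error: "Enter a promotion name.",
  })
);
```

Whatever markup a team ends up with, the checklist above stays the same: tab to the control with NVDA running and confirm the error is announced.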

AAC in development #

When front-end components needed to be refactored or built from scratch, I collaborated with the tech lead and developers. Using AAC to guide conversations helped us achieve semantic HTML elements and attributes that met the desired outcomes.

Quality assurance testing with AAC #

For quality assurance testers, I ran coaching workshops on testing with AAC. Each AAC includes one or more checklist items written in plain language, which makes them easy for testers to pick up. This was critical to ensure that new features were implemented as intended. I provided extra support for testing that involved using a screen reader.

My tips for screen reader testing in Agile product processes:

- Learn how to stop the screen reader.

- Increase the estimate of story points to allow time to learn how to operate the screen reader.

- Increase the estimate of points for all stories that involve screen reader testing. By nature of needing to listen, it is more time-intensive than other manual inspections.

- Display the speech output as text.

Results #

The entire team’s capability was uplifted throughout the process. The team can now design, build and test web experiences with accessibility from the start. Using AAC from end to end provided a fast way to build the feature and meet accessibility requirements. The team also came away motivated and able to sustain the new way of working.

When one personally sees accessibility as the right thing to do and the smart thing to do, they are motivated for good. They will see the powerful benefits that accessibility provides to end users generally and to the design/development process – better design, cleaner semantic code, higher search engine rankings, better browser compatibility, etc.

Jared Smith, WebAIM’s Hierarchy for Motivating Accessibility Change

By shifting left, team velocity was only minimally impacted as we adopted new ways of working. The project resulted in a significant reduction of issues released into production. Issues were also discovered and addressed earlier, with a faster turnaround. This avoids costly remediation in production environments.

Mock-ups and component designs included AAC annotations to communicate requirements before user stories were finalised.

In JIRA, relevant AAC was added to stories alongside business acceptance criteria. This stimulated questions and collaboration among all roles during backlog grooming. Early discussions about our process helped reduce ambiguity and assumptions. This clarity made it easier to estimate stories with certainty. In turn, stories became easier for our product manager to prioritise and schedule.

Conversations with developers gave us a clear understanding of how the accessibility requirements would benefit people using the application.

QA testers quickly gained confidence and competence in manual accessibility testing procedures. They became efficient and effective at identifying passing results and logging a defect when something failed an AAC check.

Bonus result #

Our new product feature uses accessible tooltips. I discovered gaps in the old AAC2, Content on hover, so I wrote a replacement AAC2 based on the Hoverable condition in WCAG 1.4.13: Content on Hover or Focus (Level AA).
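To show what the Hoverable condition asks for, here is a small DOM-free sketch of tooltip visibility logic. It is an illustrative model, not the tooltip component from the feature: the event names, `TooltipState` and the helper functions are hypothetical. It assumes the tooltip sits flush against its trigger, so the pointer can move from one to the other without crossing a gap. A real component might add a short hide delay instead. The Escape handling goes slightly beyond Hoverable and reflects the Dismissible condition of the same success criterion.

```typescript
// Hypothetical model for illustration; names are not from the AAC repository.
type TooltipEvent =
  | "trigger-enter"
  | "trigger-leave"
  | "tooltip-enter"
  | "tooltip-leave"
  | "escape";

interface TooltipState {
  overTrigger: boolean; // pointer is over the element that owns the tooltip
  overTooltip: boolean; // pointer is over the tooltip content itself
  dismissed: boolean;   // user pressed Escape while the tooltip was showing
}

const initialTooltipState: TooltipState = {
  overTrigger: false,
  overTooltip: false,
  dismissed: false,
};

function nextTooltipState(state: TooltipState, event: TooltipEvent): TooltipState {
  switch (event) {
    case "trigger-enter":
      // A fresh hover on the trigger clears an earlier Escape dismissal.
      return { ...state, overTrigger: true, dismissed: false };
    case "trigger-leave":
      return { ...state, overTrigger: false };
    case "tooltip-enter":
      return { ...state, overTooltip: true };
    case "tooltip-leave":
      return { ...state, overTooltip: false };
    case "escape":
      return { ...state, dismissed: true };
  }
}

// Hoverable: the tooltip stays visible while the pointer is over either
// the trigger or the tooltip, unless the user has dismissed it.
function isTooltipVisible(state: TooltipState): boolean {
  return (state.overTrigger || state.overTooltip) && !state.dismissed;
}

// Apply a sequence of events to the initial state.
function replayTooltipEvents(events: TooltipEvent[]): TooltipState {
  return events.reduce(nextTooltipState, initialTooltipState);
}
```

In this model, replaying `trigger-enter`, `trigger-leave`, `tooltip-enter` leaves the tooltip visible, which is the behaviour a tester would confirm by moving the pointer from the trigger onto the tooltip content.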

Then, I collaborated with Ross Mullen. He reviewed my refinements to the AAC and merged them into the repository. These 10 Accessibility Acceptance Criteria and checklist items are available for you to adopt in your team and to accelerate accessibility efforts in your projects.
